
Llm_n(question) CPU times: user 5min 2s, sys: 4.17 s, total: 5min 6s Question = "What NFL team won the Super Bowl in the year Justin Bieber was born?" Llm_chain = LLMChain(prompt=prompt, llm=llm)

create language chain using prompt template and loaded model:.Llama_init_from_file: kv self size = 512.00 MB CPU times: user 572 ms, sys: 711 ms, total: 1.28 s Llama_model_load: model size = 4017.27 MB / num tensors = 291 Llama_model_load: loading tensors from './gpt4all-main/chat/gpt4all-lora-q-converted.bin' Llama_model_load: mem required = 5809.78 MB (+ 2052.00 MB per state) Llama_model_load: ggml ctx size = 81.25 KB Llama_model_load: ggml map size = 4017.70 MB Llama_model_load: loading model from './gpt4all-main/chat/gpt4all-lora-q-converted.bin' - please wait. Llm = LlamaCpp(model_path=GPT4ALL_MODEL_PATH) """ prompt = PromptTemplate(template=template, input_variables=) #įrom langchain import PromptTemplate, LLMChain gpt4all-main/chat/gpt4all-lora-q-converted.bin GPT4ALL_MODEL_PATH = "./gpt4all-main/chat/gpt4all-lora-q-converted.bin" langchain DemoĮxample of running a prompt using langchain.

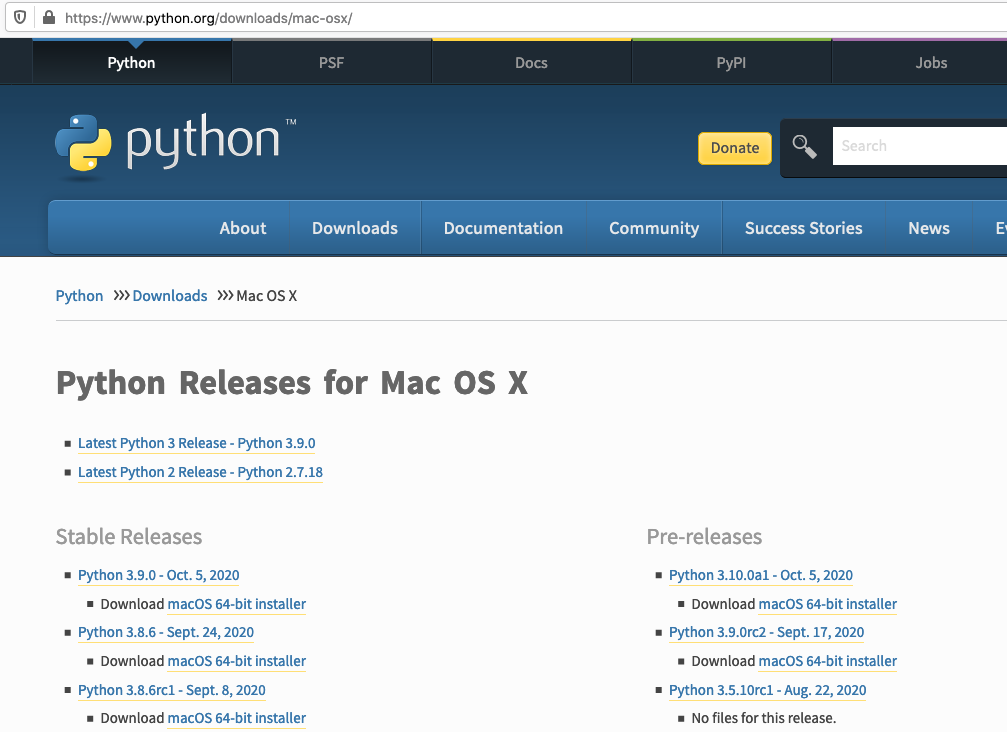

gpt4all-main/chat/gpt4all-lora-quantized.bin #!python3.10 -m llama.download -model_size 7B -folder llama/ Tested on a mid-2015 16GB Macbook Pro, concurrently running Docker (a single container running a sepearate Jupyter server) and Chrome with approx. GPT4All Langchain DemoĮxample of locally running GPT4All, a 4GB, llama.cpp based large langage model (LLM) under langchachain]( ), in a Jupyter notebook running a Python 3.10 kernel.

The following script can be downloaded as a Jupyter notebook from this gist. My laptop (a mid-2015 Macbook Pro, 16GB) was in the repair shop for over a week of that period, and it’s only really now that I’ve had a even a quick chance to play, although I knew 10 days ago what sort of thing I wanted to try, and that has only really become off-the-shelf possible in the last couple of days. Over the last three weeks or so I’ve been following the crazy rate of development around locally run large language models (LLMs), starting with llama.cpp, then alpaca and most recently (?!) gpt4all.
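As a rough illustration of the pattern the notebook relies on (a prompt template is filled in with the question, and the resulting text is handed to the model), here is a minimal pure-Python sketch. It is not langchain's implementation: `TEMPLATE`, `template_variables`, `fake_llm` and `run_chain` are hypothetical names made up for this example, the template text is an assumption, and the stand-in model simply reports the prompt length so the sketch runs without downloading a 4GB model file.

```python
from string import Formatter

# Hypothetical template text, assumed for illustration only.
TEMPLATE = "Question: {question}\n\nAnswer:"


def template_variables(template: str) -> list[str]:
    """Return the placeholder names found in a str.format-style template."""
    return [name for _, name, _, _ in Formatter().parse(template) if name]


def fake_llm(prompt: str) -> str:
    """Stand-in for a real model: just reports the size of the prompt."""
    return f"(model reply to a {len(prompt)}-character prompt)"


def run_chain(template: str, llm, **values) -> str:
    """Fill the template with the given values and pass the prompt to the model."""
    missing = set(template_variables(template)) - values.keys()
    if missing:
        raise KeyError(f"missing template variables: {sorted(missing)}")
    return llm(template.format(**values))


question = "What NFL team won the Super Bowl in the year Justin Bieber was born?"
print(run_chain(TEMPLATE, fake_llm, question=question))
```

Swapping `fake_llm` for any callable that takes a prompt string and returns text keeps the rest of the sketch unchanged, which is roughly the separation of concerns a chain provides.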
